Upgrade to the latest version of Portworx Enterprise for continued support. Documentation for the latest version of Portworx Enterprise is available on the Portworx documentation site.

Anthos

Portworx has been certified with the following Anthos versions:

- 1.11, including Anthos on bare metal

- 1.12, including Anthos on bare metal

- 1.13, including Anthos on bare metal

- 1.14, including Anthos on bare metal

Architecture

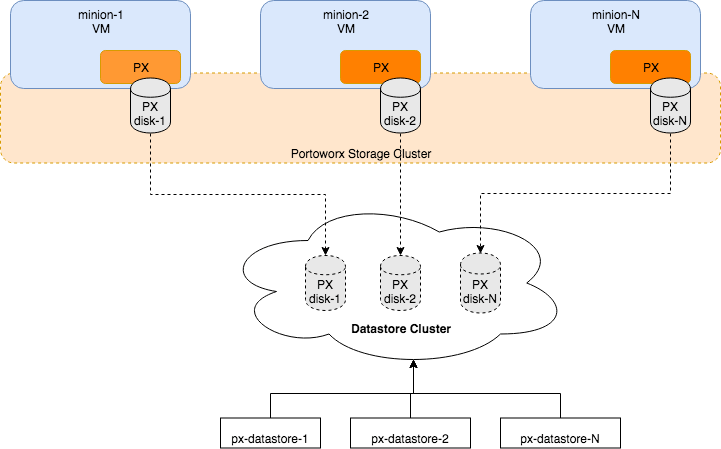

The following diagram gives an overview of the Portworx architecture on vSphere using shared datastores.

- Portworx runs on each Kubernetes worker node.

- Based on the spec provided by the end user, Portworx on each node creates its disk on the configured shared datastores or datastore clusters.

- Portworx aggregates all of the disks and forms a single storage cluster. End users can provision PVCs (Persistent Volume Claims), PVs (Persistent Volumes), and snapshots from this storage cluster.

- Portworx tracks and manages the disks that it creates. If a VM fails and a new VM spins up, Portworx on the new VM can attach to the same disk that the node on the failed VM created.

Installation

This topic explains how to install Portworx with Kubernetes on Anthos.

NOTES:

- VMware vSphere v6.7u3 or newer is required.

- Run these steps from the Anthos admin workstation or any other machine that has kubectl access to your cluster.

Step 1: vCenter user for Portworx

Using your vSphere console, provide Portworx with a vCenter server user that has the following minimum vSphere privileges:

- Datastore
  - Allocate space
  - Browse datastore
  - Low level file operations
  - Remove file
- Host
  - Local operations
    - Reconfigure virtual machine
- Virtual machine
  - Change Configuration
    - Add existing disk
    - Add new disk
    - Add or remove device
    - Advanced configuration
    - Change Settings
    - Extend virtual disk
    - Modify device settings
    - Remove disk

If you create a custom role as above, make sure to select Propagate to children when assigning the user to the role.
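If you manage vSphere from the command line, you can also create the role above with govc, the vSphere CLI from the govmomi project. The sketch below rests on several assumptions. The role name (portworx-role), the principal (px-user@vsphere.local), the mapping from console names to privilege IDs, and granting the role on the inventory root (/) are all our own choices. Compare the IDs with the privilege list in your vCenter before you use them. By default, the script only prints the govc commands. Set DRY_RUN=0 to run them.

```shell
#!/bin/sh
# Sketch: create the Portworx vCenter role with govc and assign it to a user.
# ASSUMPTIONS: role name, principal, inventory path "/", and the privilege IDs
# below are illustrative; verify the IDs against your vCenter before running.

PX_ROLE="${PX_ROLE:-portworx-role}"                   # hypothetical role name
PX_PRINCIPAL="${PX_PRINCIPAL:-px-user@vsphere.local}" # hypothetical vCenter user

# Privilege IDs that we assume correspond to the console names listed above.
px_privileges() {
  cat <<'EOF'
Datastore.AllocateSpace
Datastore.Browse
Datastore.FileManagement
Datastore.DeleteFile
Host.Local.ReconfigVM
VirtualMachine.Config.AddExistingDisk
VirtualMachine.Config.AddNewDisk
VirtualMachine.Config.AddRemoveDevice
VirtualMachine.Config.AdvancedConfig
VirtualMachine.Config.Settings
VirtualMachine.Config.DiskExtend
VirtualMachine.Config.EditDevice
VirtualMachine.Config.RemoveDisk
EOF
}

# Print the command (DRY_RUN=1, the default) or execute it (DRY_RUN=0).
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

create_px_role() {
  # Unquoted on purpose: each privilege ID becomes a separate argument.
  # shellcheck disable=SC2046
  run govc role.create "$PX_ROLE" $(px_privileges)
  # -propagate=true mirrors "Propagate to children" in the vSphere console.
  run govc permissions.set -principal "$PX_PRINCIPAL" -role "$PX_ROLE" -propagate=true /
}
```

Run create_px_role once to review the two generated commands. Then run DRY_RUN=0 create_px_role with GOVC_URL and your vCenter credentials configured for govc.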


Step 2: Create a Kubernetes secret with your vCenter user and password

Update the following items in the Secret template below to match your environment:

- VSPHERE_USER: Use the output of echo -n '<vcenter-server-user>' | base64

- VSPHERE_PASSWORD: Use the output of echo -n '<vcenter-server-password>' | base64

The -n flag stops echo from appending a newline, which would otherwise be encoded into the value.

apiVersion: v1
kind: Secret
metadata:
  name: px-vsphere-secret
  namespace: kube-system
type: Opaque
data:
  VSPHERE_USER: XXXX
  VSPHERE_PASSWORD: XXXX

After you update the template with your user and password, apply it with kubectl apply.
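You can fold the encoding and editing steps above into one script. This minimal sketch assumes two hypothetical variables, VC_USER and VC_PASSWORD, that hold your credentials. It prints the same px-vsphere-secret manifest with both values base64-encoded. It uses printf '%s' so that no trailing newline ends up in the encoded value. It pipes the result through tr to strip the line wrapping that GNU base64 adds to long outputs.

```shell
#!/bin/sh
# Sketch: render the px-vsphere-secret manifest from two hypothetical variables.
# VC_USER / VC_PASSWORD are illustrative names; load them from a secure source.

b64() {
  # Encode exactly the given string (no trailing newline) and drop line wrapping.
  printf '%s' "$1" | base64 | tr -d '\n'
}

render_px_vsphere_secret() {
  # Abort with a message if either credential is missing.
  : "${VC_USER:?set VC_USER to the vCenter user}"
  : "${VC_PASSWORD:?set VC_PASSWORD to the vCenter password}"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: px-vsphere-secret
  namespace: kube-system
type: Opaque
data:
  VSPHERE_USER: $(b64 "$VC_USER")
  VSPHERE_PASSWORD: $(b64 "$VC_PASSWORD")
EOF
}
```

After exporting VC_USER and VC_PASSWORD, render_px_vsphere_secret | kubectl apply -f - applies the manifest without writing the encoded credentials to disk.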

Step 3: Generate the specs

vSphere environment details

Export the following environment variables based on your vSphere environment. These variables will be used in a later step when generating the YAML spec.

# Hostname or IP of your vCenter server
export VSPHERE_VCENTER=myvcenter.net

# Prefix of the names of your shared ESXi datastores. Portworx will use datastores whose names match this prefix to create disks.
export VSPHERE_DATASTORE_PREFIX=mydatastore-

# Change this to the port number vSphere services are running on if you have changed the default port 443
export VSPHERE_VCENTER_PORT=443

Disk templates

A disk template defines the VMDK properties that Portworx uses as a reference when it creates the actual disks. Portworx then creates the virtual volumes for your PVCs out of those disks.

The template adheres to the following format:

type=<vmdk type>,size=<size of the vmdk>

- type: Supported types are thin, zeroedthick, eagerzeroedthick, and lazyzeroedthick

- size: This is the size of the VMDK in GiB
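A typo in the template, such as zeroedthik, is easy to miss once it is buried in a long spec URL. The hypothetical function below is not part of Portworx. It only checks the format described above: a type from the four listed values and a size that we assume is a whole, positive number of GiB. Fields may appear in either order.

```shell
#!/bin/sh
# Hypothetical pre-check for a disk template of the form type=<vmdk type>,size=<GiB>.
# It enforces only the format described above; Portworx does its own validation.
validate_disk_template() {
  dt_type=""
  dt_size=""
  old_ifs="$IFS"
  IFS=','
  # Split the template on commas and pick out the type= and size= fields.
  for kv in $1; do
    case "$kv" in
      type=*) dt_type="${kv#type=}" ;;
      size=*) dt_size="${kv#size=}" ;;
      *) IFS="$old_ifs"; echo "unknown field: $kv" >&2; return 1 ;;
    esac
  done
  IFS="$old_ifs"

  case "$dt_type" in
    thin|zeroedthick|eagerzeroedthick|lazyzeroedthick) ;;
    *) echo "unsupported type: '$dt_type'" >&2; return 1 ;;
  esac

  # ASSUMPTION: the size is a whole number of GiB greater than zero.
  case "$dt_size" in
    ''|*[!0-9]*) echo "invalid size: '$dt_size'" >&2; return 1 ;;
  esac
  if [ "$dt_size" -le 0 ]; then
    echo "invalid size: '$dt_size'" >&2
    return 1
  fi
}
```

After exporting the template, validate_disk_template "$VSPHERE_DISK_TEMPLATE" returns a non-zero status and prints the reason if the value does not match the format.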

The following example creates a 150 GiB zeroed thick VMDK on each VM:

export VSPHERE_DISK_TEMPLATE=type=zeroedthick,size=150

Set max storage nodes

Anthos cluster management operations, such as upgrades, recycle cluster nodes by deleting and recreating them. During this process, the cluster momentarily scales up to more nodes than initially installed. For example, a 3-node cluster may increase to a 4-node cluster.

To prevent Portworx from creating storage on these additional nodes, you must cap the number of Portworx nodes that will act as storage nodes. You can do this by setting the MAX_NUMBER_OF_NODES_PER_ZONE environment variable according to the following requirements:

- If your Anthos cluster does not have zones configured, this number should be your initial number of cluster nodes.

- If your Anthos cluster has zones configured, this number should be the initial number of cluster nodes per zone.
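To find this number, count the nodes per zone before any upgrade or other node recycling starts. This sketch assumes your nodes carry the standard Kubernetes topology.kubernetes.io/zone label. Check whether your Anthos environment sets that label. The function reads one zone name per line and prints the largest per-zone count. When no node has the label, every line is empty, so the result is the total node count.

```shell
#!/bin/sh
# Sketch: derive MAX_NUMBER_OF_NODES_PER_ZONE from the current node list.
# Input (stdin): one zone name per line; an empty line means "no zone label".
max_nodes_per_zone() {
  awk '
    { zone = ($0 == "") ? "(none)" : $0; count[zone]++ }
    END {
      max = 0
      for (z in count) if (count[z] > max) max = count[z]
      print max
    }'
}

# Example (requires cluster access; ASSUMES the topology.kubernetes.io/zone label):
#   kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.labels.topology\.kubernetes\.io/zone}{"\n"}{end}' \
#     | max_nodes_per_zone
```

If your zones hold different numbers of nodes, taking the largest count is a judgment call on our part. Adjust it if you want a stricter cap.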

export MAX_NUMBER_OF_NODES_PER_ZONE=3
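The spec generation step expands these variables inside a double-quoted curl URL. Any variable that is not set silently becomes an empty query parameter. A quick preflight check can catch that before the install. The helper below is hypothetical. It checks that each variable exported so far is non-empty and that the port and the node cap are positive integers.

```shell
#!/bin/sh
# Hypothetical preflight: report missing or malformed install variables.
px_preflight() {
  rc=0
  for name in VSPHERE_VCENTER VSPHERE_DATASTORE_PREFIX VSPHERE_VCENTER_PORT \
              VSPHERE_DISK_TEMPLATE MAX_NUMBER_OF_NODES_PER_ZONE; do
    eval "value=\${$name:-}"   # portable indirect lookup (no bash-only \${!name})
    if [ -z "$value" ]; then
      echo "missing: $name" >&2
      rc=1
    fi
  done
  # The port and the per-zone node cap must be positive integers.
  for name in VSPHERE_VCENTER_PORT MAX_NUMBER_OF_NODES_PER_ZONE; do
    eval "value=\${$name:-}"
    case "$value" in
      ''|*[!0-9]*) [ -n "$value" ] && echo "not a number: $name=$value" >&2; rc=1 ;;
      *) [ "$value" -gt 0 ] || { echo "must be > 0: $name" >&2; rc=1; } ;;
    esac
  done
  return "$rc"
}
```

Running px_preflight && echo ready in the same shell where you exported the variables prints ready only when all checks pass.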

Install the Portworx Operator

curl -fsL -o px-operator.yaml "https://install.portworx.com/?comp=pxoperator"

Apply the Portworx Operator spec

kubectl apply -f px-operator.yaml

Set the Portworx version

Set an environment variable to the latest major version of Portworx:

export PXVER=<portworx-version>

Generate the spec file
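The curl command below packs several of the exported variables into one long query string. To review that string before downloading, you can use a hypothetical helper like the one below. It assembles the same URL from the same variables, including the same hard-coded cluster name portworx-demo-cluster, and prints it. It does not add or change any parameters.

```shell
#!/bin/sh
# Hypothetical helper: print the spec-generator URL used by the curl command,
# built from the same variables, so it can be inspected before downloading.
px_spec_url() {
  base="https://install.portworx.com/${PXVER}"
  q="operator=true&gke=true&mz=${MAX_NUMBER_OF_NODES_PER_ZONE}&csida=true"
  q="${q}&c=portworx-demo-cluster&b=true&st=k8s&csi=true&vsp=true"
  q="${q}&ds=${VSPHERE_DATASTORE_PREFIX}&vc=${VSPHERE_VCENTER}"
  q="${q}&s=%22${VSPHERE_DISK_TEMPLATE}%22"
  q="${q}&misc=-rt_opts%20kvdb_failover_timeout_in_mins=25,wait-before-retry-period-in-secs=360"
  printf '%s?%s\n' "$base" "$q"
}
```

Once the output looks right, curl -fsL -o px-spec.yaml "$(px_spec_url)" downloads the same spec as the command below.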

Now generate the storage cluster spec with the following command:

curl -fsL -o px-spec.yaml "https://install.portworx.com/$PXVER?operator=true&gke=true&mz=$MAX_NUMBER_OF_NODES_PER_ZONE&csida=true&c=portworx-demo-cluster&b=true&st=k8s&csi=true&vsp=true&ds=$VSPHERE_DATASTORE_PREFIX&vc=$VSPHERE_VCENTER&s=%22$VSPHERE_DISK_TEMPLATE%22&misc=-rt_opts%20kvdb_failover_timeout_in_mins=25,wait-before-retry-period-in-secs=360"

Apply specs

Apply the Operator and StorageCluster specs you generated in the section above using the kubectl apply command:

Deploy the Operator. The sample output below is from a first-time apply. If you already applied px-operator.yaml above, kubectl reports the resources as unchanged instead:

kubectl apply -f px-operator.yaml

serviceaccount/portworx-operator created
podsecuritypolicy.policy/px-operator created
clusterrole.rbac.authorization.k8s.io/portworx-operator created
clusterrolebinding.rbac.authorization.k8s.io/portworx-operator created
deployment.apps/portworx-operator created

Deploy the StorageCluster:

kubectl apply -f px-spec.yaml

storagecluster.core.libopenstorage.org/portworx-demo-cluster created

Monitor the Portworx pods

Enter the following kubectl get command, waiting until all Portworx pods show as ready in the output:

kubectl get pods -o wide -n kube-system -l name=portworx

Enter the following kubectl describe command with the ID of one of your Portworx pods to show the current installation status for individual nodes:

kubectl -n kube-system describe pods <portworx-pod-id>

Events:
  Type     Reason                             Age                     From                  Message
  ----     ------                             ----                    ----                  -------
  Normal   Scheduled                          7m57s                   default-scheduler     Successfully assigned kube-system/portworx-qxtw4 to k8s-node-2
  Normal   Pulling                            7m55s                   kubelet, k8s-node-2   Pulling image "portworx/oci-monitor:2.5.0"
  Normal   Pulled                             7m54s                   kubelet, k8s-node-2   Successfully pulled image "portworx/oci-monitor:2.5.0"
  Normal   Created                            7m53s                   kubelet, k8s-node-2   Created container portworx
  Normal   Started                            7m51s                   kubelet, k8s-node-2   Started container portworx
  Normal   PortworxMonitorImagePullInPrgress  7m48s                   portworx, k8s-node-2  Portworx image portworx/px-enterprise:2.5.0 pull and extraction in progress
  Warning  NodeStateChange                    5m26s                   portworx, k8s-node-2  Node is not in quorum. Waiting to connect to peer nodes on port 9002.
  Warning  Unhealthy                          5m15s (x15 over 7m35s)  kubelet, k8s-node-2   Readiness probe failed: HTTP probe failed with statuscode: 503
  Normal   NodeStartSuccess                   5m7s                    portworx, k8s-node-2  PX is ready on this node

NOTE: In your output, the image pulled will differ based on your chosen Portworx license type and version.
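You can script both checks above. The two functions below are hypothetical helpers that read kubectl output from stdin, so they assume nothing beyond the output formats shown here. pods_ready reads kubectl get pods --no-headers output and succeeds only when every pod's READY column is n/n. px_events prints the type and reason of each event whose source is portworx in kubectl describe output, which shows how far a node has progressed, for example up to NodeStartSuccess.

```shell
#!/bin/sh
# Hypothetical helpers for the two checks above; both read kubectl output on stdin.

# Succeeds when every pod's READY column (field 2) is n/n with n > 0.
# Input: kubectl get pods ... --no-headers
pods_ready() {
  awk '
    { split($2, r, "/"); total++; if (r[2] > 0 && r[1] == r[2]) ready++ }
    END {
      printf "%d/%d Portworx pods ready\n", ready, total
      if (total > 0 && ready == total) exit 0
      exit 1
    }'
}

# Prints "<Type> <Reason>" for each event whose From column is "portworx, <node>".
# Input: kubectl describe pods ... (the Events section)
px_events() {
  awk '/ portworx, / { print $1, $2 }'
}

# Example (requires cluster access):
#   until kubectl get pods -n kube-system -l name=portworx --no-headers | pods_ready; do
#     sleep 30
#   done
#   kubectl -n kube-system describe pods <portworx-pod-id> | px_events
```

Once the pods exist, kubectl wait --for=condition=Ready pod -l name=portworx -n kube-system --timeout=30m is a built-in alternative to the polling loop.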

Monitor the cluster status

Use the pxctl status command to display the status of your Portworx cluster:

PX_POD=$(kubectl get pods -l name=portworx -n kube-system -o jsonpath='{.items[0].metadata.name}')

kubectl exec $PX_POD -n kube-system -- /opt/pwx/bin/pxctl status
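To turn this into a pass/fail check, for example at the end of an install script, you can look for the status line in the pxctl output. The helper below is hypothetical. It assumes that a healthy node prints a line containing Status: PX is operational. Confirm the exact wording against the pxctl status output of your Portworx version.

```shell
#!/bin/sh
# Hypothetical check: succeed only if pxctl status output (stdin) reports PX as operational.
# ASSUMPTION: a healthy node prints a line containing "Status: PX is operational".
px_operational() {
  grep -q 'Status: PX is operational'
}

# Example (requires cluster access), reusing PX_POD from the commands above:
#   kubectl exec "$PX_POD" -n kube-system -- /opt/pwx/bin/pxctl status | px_operational \
#     && echo "Portworx is operational"
```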